[4] Using the Ceph Nautilus File System

7 July 2020

We now configure the client host [lclt01] to use Ceph storage.

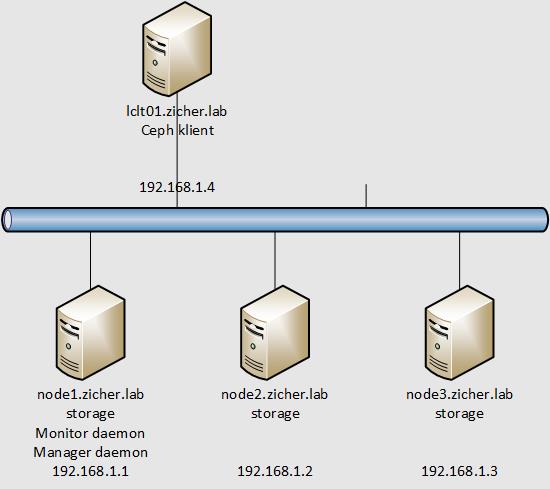

The diagram above shows the network layout.

In this example we mount the Ceph storage as a file system on the client host.

[1] Transfer the SSH public key to the client and configure the client from the [Admin Node].

# transfer the key

[root@node1 ~]# ssh-copy-id lclt01

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/root/.ssh/id_rsa.pub"

The authenticity of host 'lclt01 (192.168.1.4)' can't be established.

ECDSA key fingerprint is SHA256:t4KMpxJTwPeEikCQ/ronIzH6TuXILoT6W98pB/Be18Q.

Are you sure you want to continue connecting (yes/no/[fingerprint])? yes

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

root@lclt01's password: # enter the root password for lclt01

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'lclt01'"

and check to make sure that only the key(s) you wanted were added.

# install the packages

[root@node1 ~]# ssh lclt01 "dnf -y install centos-release-ceph-nautilus"

[root@node1 ~]# ssh lclt01 "dnf -y install ceph-fuse"

# transfer the required files to the client host

[root@node1 ~]# scp /etc/ceph/ceph.conf lclt01:/etc/ceph/

ceph.conf 100% 280 564.3KB/s 00:00

[root@node1 ~]# scp /etc/ceph/ceph.client.admin.keyring lclt01:/etc/ceph/

ceph.client.admin.keyring 100% 151 426.2KB/s 00:00

[root@node1 ~]# ssh lclt01 "chown ceph. /etc/ceph/ceph.*"
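Before moving on, it can help to confirm that both files copied above actually reached the client. The following is only a minimal sketch and not part of the original procedure: `missing_ceph_files` is a hypothetical helper name, and the two file names are the ones used in the `scp` commands above.

```shell
#!/bin/sh
# Hypothetical helper (not part of Ceph): print every expected file that is
# missing from the given directory (default: /etc/ceph).
missing_ceph_files() {
    dir="${1:-/etc/ceph}"
    for f in ceph.conf ceph.client.admin.keyring; do
        [ -f "$dir/$f" ] || echo "$f"
    done
}

# Example, to be run on the client: prints nothing when both files exist.
# missing_ceph_files /etc/ceph
```

An empty result means both files are in place; any printed name points at a copy that needs to be repeated.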

[2] Configure the MDS (MetaData Server) on a node. Here we do this on [node1].

# create the directory

# directory name => (Cluster Name)-(Node Name)

[root@node1 ~]# mkdir -p /var/lib/ceph/mds/ceph-node1

[root@node1 ~]# ceph-authtool --create-keyring /var/lib/ceph/mds/ceph-node1/keyring --gen-key -n mds.node1

creating /var/lib/ceph/mds/ceph-node1/keyring

[root@node1 ~]# chown -R ceph. /var/lib/ceph/mds/ceph-node1

[root@node1 ~]# ceph auth add mds.node1 osd "allow rwx" mds "allow" mon "allow profile mds" -i /var/lib/ceph/mds/ceph-node1/keyring

added key for mds.node1

[root@node1 ~]# systemctl enable --now ceph-mds@node1

Created symlink /etc/systemd/system/ceph-mds.target.wants/ceph-mds@node1.service → /usr/lib/systemd/system/ceph-mds@.service.
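The directory name and the `mds.` identity both follow from the cluster name and the node name, so the same sequence can be generated for another node. The sketch below only prints the commands from step [2] with the names substituted; it runs nothing. `mds_setup_commands` is a hypothetical helper name, and the default cluster name `ceph` is taken from the path used above.

```shell
#!/bin/sh
# Hypothetical helper: print (do not run) the MDS setup commands for a node.
# Arguments: cluster name (default "ceph") and node name.
mds_setup_commands() {
    cluster="${1:-ceph}"
    node="$2"
    dir="/var/lib/ceph/mds/${cluster}-${node}"
    cat <<EOF
mkdir -p ${dir}
ceph-authtool --create-keyring ${dir}/keyring --gen-key -n mds.${node}
chown -R ceph. ${dir}
ceph auth add mds.${node} osd "allow rwx" mds "allow" mon "allow profile mds" -i ${dir}/keyring
systemctl enable --now ceph-mds@${node}
EOF
}

# Print the commands for node1; review them before running anything.
mds_setup_commands ceph node1
```

Printing rather than executing keeps the sketch safe to try on a machine without Ceph installed.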

[3] Create 2 RADOS pools, one for Data and one for MetaData, on the MDS node, then create the file system on them. The number at the end of each create command is the placement group count; for details see the official documentation: http://docs.ceph.com/docs/master/rados/operations/placement-groups/ (we use 64).

[root@node1 ~]# ceph osd pool create cephfs_data 64

pool 'cephfs_data' created

[root@node1 ~]# ceph osd pool create cephfs_metadata 64

pool 'cephfs_metadata' created

[root@node1 ~]# ceph fs new cephfs cephfs_metadata cephfs_data

new fs with metadata pool 4 and data pool 3

[root@node1 ~]# ceph fs ls

name: cephfs, metadata pool: cephfs_metadata, data pools: [cephfs_data ]

[root@node1 ~]# ceph mds stat

cephfs:1 {0=node1=up:active}

[root@node1 ~]# ceph fs status cephfs

cephfs - 0 clients

======

+------+--------+-------+---------------+-------+-------+

| Rank | State | MDS | Activity | dns | inos |

+------+--------+-------+---------------+-------+-------+

| 0 | active | node1 | Reqs: 0 /s | 10 | 13 |

+------+--------+-------+---------------+-------+-------+

+-----------------+----------+-------+-------+

| Pool | type | used | avail |

+-----------------+----------+-------+-------+

| cephfs_metadata | metadata | 1536k | 14.1G |

| cephfs_data | data | 0 | 14.1G |

+-----------------+----------+-------+-------+

+-------------+

| Standby MDS |

+-------------+

+-------------+

MDS version: ceph version 14.2.9 (581f22da52345dba46ee232b73b990f06029a2a0) nautilus (stable)
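The value 64 used for the pools in step [3] is a placement group count. The placement-groups documentation linked there has long offered a rule of thumb for the cluster-wide total: (number of OSDs × 100) / replica count, rounded up to the next power of two. The sketch below assumes that rule; `suggest_pg_total` is a hypothetical helper name, and the inputs in the example (3 OSDs, 3 replicas) are illustrative values, not figures taken from this cluster.

```shell
#!/bin/sh
# Hypothetical helper: suggested total PG count across all pools,
# computed as (OSDs * 100) / replicas, rounded up to a power of two.
suggest_pg_total() {
    osds="$1"
    replicas="$2"
    target=$(( osds * 100 / replicas ))
    pg=1
    while [ "$pg" -lt "$target" ]; do
        pg=$(( pg * 2 ))
    done
    echo "$pg"
}

# Illustrative values only: 3 OSDs with 3 replicas.
suggest_pg_total 3 3
```

With these illustrative inputs the function prints 128, which would correspond to two pools of 64 placement groups each. Treat the result as a starting point and check it against the documentation for the release in use.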

[4] Now mount the Ceph file system on the client host.

# write the client's Base64-encoded key to a file

[root@lclt01 ~]# ceph-authtool -p /etc/ceph/ceph.client.admin.keyring > admin.key

[root@lclt01 ~]# chmod 600 admin.key

[root@lclt01 ~]# mount -t ceph node1.zicher.lab:6789:/ /mnt -o name=admin,secretfile=admin.key

[root@lclt01 ~]# df -hT

System plików Typ rozm. użyte dost. %uż. zamont. na

devtmpfs devtmpfs 966M 0 966M 0% /dev

tmpfs tmpfs 994M 0 994M 0% /dev/shm

tmpfs tmpfs 994M 9,3M 985M 1% /run

tmpfs tmpfs 994M 0 994M 0% /sys/fs/cgroup

/dev/mapper/cl_lclt01vm-root xfs 17G 5,4G 12G 33% /

/dev/sda2 ext4 976M 202M 708M 23% /boot

/dev/sda1 vfat 599M 6,8M 593M 2% /boot/efi

tmpfs tmpfs 199M 1,2M 198M 1% /run/user/42

tmpfs tmpfs 199M 4,0K 199M 1% /run/user/0

192.168.1.1:6789:/ ceph 15G 0 15G 0% /mnt
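The mount above does not survive a reboot. One common way to make it persistent is an `/etc/fstab` entry for the kernel CephFS client. The sketch below only builds such a line and does not modify any file. `cephfs_fstab_line` is a hypothetical helper name; the absolute path `/root/admin.key` is an assumption (the key file above was written to root's current directory), and the `noatime,_netdev` options are a typical choice rather than something the original setup prescribes.

```shell
#!/bin/sh
# Hypothetical helper: build an /etc/fstab entry for a kernel CephFS mount.
# Arguments: monitor address (host:port), mount point, client name, secret file.
cephfs_fstab_line() {
    printf '%s:/ %s ceph name=%s,secretfile=%s,noatime,_netdev 0 0\n' \
        "$1" "$2" "$3" "$4"
}

# Assumption: admin.key from step [4] lives in /root.
cephfs_fstab_line node1.zicher.lab:6789 /mnt admin /root/admin.key
```

The `_netdev` option tells the system to wait for the network before attempting the mount. Review the printed line before appending it to `/etc/fstab`, and test it with `mount -a`.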